[Image caption: CSF advances the ball, but not enough to matter, especially considering the effort.]

This may have worked for Woody at Ohio State, but it's a poor model for progress in cyber security. The "pace of the game" is governed by the clock speed of innovation on the part of threat agents, not by us defenders. Plus, most organizations aren't sitting at "3rd down and 3 yds to go" -- more like "3rd down and 33 yds to go". "Three yards and a cloud of dust" is ultimately a failing strategy because it leaves us perpetually behind the minimum threshold of acceptable performance.

The One-Minute Cyber Security Framework (CSF)

Here's a super-short summary of the CSF in case you haven't read it all. The CSF is a collection of practices drawn from existing standards and frameworks, organized into five buckets. Within each bucket there are three to five categories, and within each category are between one and ten "practices" that look like this:

"Identify vulnerabilities to organizational assets (both internal and external)"

For each practice, there are between one and five references to existing standards and frameworks -- COBIT, NIST 800-53 and others, ISO/IEC 27001, CCS (CSC), and ISA 99.02.01.

A diagram is offered to explain "decision and information flows" during CSF implementation, with two loops. Notice that this is a hierarchical, command-and-control model of implementation. Managers and Senior Executives do the thinking, while everyone else does implementation.

Four "tiers" of implementation are proposed -- zero through three. These are basically capability maturity levels. The CSF proposes that you can be higher in maturity in some buckets and lower in others, and this variation is your "profile". Implementation follows a generic operations improvement structure:

- Executives decide on overall objectives

- Executives decide on target profile

- Managers assess current profile

- Compare target to current profile, and identify gap

- Implement plans to close the gap
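The gap analysis in the last two steps is simple enough to sketch in a few lines. This is a hypothetical illustration, not something the CSF itself specifies: it assumes the five buckets are the CSF's five functions (Identify, Protect, Detect, Respond, Recover), that each is scored on the 0-3 tier scale described above, and that a profile is just a mapping from function to tier. The profile values, the function names `profile_gap` and `priorities`, and the "largest gap first" ordering are all made up for the example.

```python
# Hypothetical sketch of CSF-style profile gap analysis (not part of the CSF itself).
CSF_FUNCTIONS = ("Identify", "Protect", "Detect", "Respond", "Recover")
MIN_TIER, MAX_TIER = 0, 3  # tiers zero through three, as described above


def profile_gap(target: dict[str, int], current: dict[str, int]) -> dict[str, int]:
    """For each function, how many tiers the current profile falls short of the target."""
    gap = {}
    for fn in CSF_FUNCTIONS:
        t, c = target[fn], current[fn]
        for tier in (t, c):
            if not MIN_TIER <= tier <= MAX_TIER:
                raise ValueError(f"{fn}: tier {tier} outside {MIN_TIER}-{MAX_TIER}")
        gap[fn] = max(t - c, 0)  # exceeding the target counts as no gap
    return gap


def priorities(gap: dict[str, int]) -> list[str]:
    """Functions with a nonzero gap, largest gap first (ties keep CSF order)."""
    return sorted((fn for fn in CSF_FUNCTIONS if gap[fn] > 0), key=lambda fn: -gap[fn])


if __name__ == "__main__":
    # Hypothetical profiles, chosen only for illustration.
    target = {"Identify": 2, "Protect": 3, "Detect": 2, "Respond": 2, "Recover": 1}
    current = {"Identify": 1, "Protect": 1, "Detect": 0, "Respond": 2, "Recover": 2}
    gaps = profile_gap(target, current)
    print(gaps)
    print(priorities(gaps))
```

Note that the sketch measures only distance to an executive-chosen target; nothing in it says whether closing that distance improves actual performance.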

Acknowledged "Areas for Improvement"

The draft writers acknowledge the following shortcomings in the CSF that need improvement:

- Authentication

- Automated Indicator Sharing

- Conformity Assessment

- Data Analytics

- International Aspects, Impacts, and Alignment

- Privacy

- Supply Chains and Interdependencies

There you have it -- the NIST CSF in one minute.

What's Good About It

Good practices are good. Best practices, if you can identify them with evidence and critical tests, are better. Giving people references back to existing standards and frameworks is helpful because it will save them search time.

Capability maturity models are good and useful. Crawl before you walk, and walk before you run.

That's what is good about the CSF. And now here's what I think is wrong with it...

What's Wrong #1: "Best Practices" Is the Wrong Focus

In a previous post, I explained why I think cyber security is not just a pile of "best practices". I recognize that the NIST folks believe they are constrained by the Executive Order, but they (and industry participants) should have pushed back and put the emphasis on performance and evidence of effectiveness instead.

At a superficial level, the CSF adds very little value if it simply organizes and cross-references existing standards and frameworks. Anyone with half a brain and an internet connection can collect them, read them, and make sense of them.

But the problem with focusing on "practices" runs deeper. The biggest flaw with the CSF and similar frameworks is the mistaken notion that the only thing that differentiates poor performers from adequate or good performers is some list of practices.

I'll explain this in the Universal Language of Executives -- golf.

In this analogy, "golf scores" are like cyber security performance over time, "golf swings" are like cyber security performance in any specific instance or setting, and "golf tips" are like specific practices, "best" or otherwise.

"Best Practices" are Like Golf Tips; and Golf Tips Alone Don't Make Good Golfers

[Image caption: Too many tips lead to crappy swings and crappy scores. Likewise with lists of "best practices".]

Anyone who has taken up golf seriously knows someone who has too many golf tips swirling around in his/her head. In fact, most serious golfers have suffered from this at one time or another. For non-golfers, the tips look like these:

- "Keep your head down and still"

- "Turn around your spine"

- "Grip the club lightly"

- "Don't sway your hips"

- "Swing on one plane"

- "Swing on two planes"

- "Grip it and rip it!!"

- "Shake hands with the target"

- (and so on)

Golf magazines traffic in tips. So do books, TV shows, and instructors. Golfers pass tips to each other, especially if one seems to be hitting particularly well -- because he/she might have "The Secret". Once golfers start to think about their swing this way, and start to believe that tips are the key to good scoring, it's hard to stop. Tips pile on tips.

The sad reality is that accumulating golf tips can never, ever make you a good golfer, for two reasons. (These reasons map back to cyber security performance... trust me!)

First, focusing on tips puts a golfer too much "in their head" -- i.e., a verbal, methodical, self-conscious state of mind. This works against the brain-and-body systems that actually produce a good golf swing and good scoring -- i.e., the "natural athlete" in us all that learned to walk and can throw and catch a ball. The "natural athlete" can be summarized in the pithy phrase: "see it, feel it, do it". This is essentially a dynamic cycle of situation assessment and execution. Extended over the many shots in a round, playing golf is an iterative cycle of "see it, feel it, do it", adjusting to the changing opportunities, risks, and rewards offered by each shot. This is roughly analogous to the Double Loop Learning that I'm proposing in the Ten Dimensions of Cyber Security Performance. Focusing on a fixed set of tips keeps you in a static frame of mind, not attuned to the changing circumstances of each shot.

Second, each golfer's "ideal swing" will not be composed of some list of "tips". Many tips conflict with each other. Every person's body is different, so tips that apply to one may not apply to another. Some of the best golfers of all time had quirks in their swing and thus violated several or even many widely advocated tips. Each golfer must find their "ideal swing" through purposeful practice, instruction from a competent teacher, and feedback from real experience, including on the course. Ultimately, what counts is that a golfer has a swing that they have confidence in, that they can repeat under pressure, and that enables them to score (i.e., perform).

This process of building the "ideal golf swing" for a golfer is analogous to building a high-performing cyber security program in a specific organization. The "tips" are incidental and ultimately not the most important factor. What counts is a framework that emphasizes dynamic performance and the work necessary to develop it gradually over time. In other words, let me say this emphatically:

- "Best practices" are not a substitute for management thinking and work, nor a substitute for professional thinking and work.

Consider this list of "best practices" as an alternative to the CSF:

- Hire good, capable people, including managers and executives

- Manage them well, focusing on what's most important, and balancing short-term with long-term, and risk-taking with tried-and-true

- Reward them for performance, and generally align incentives to desired outcomes

What if there were two identical companies: "A", which implemented the CSF, and "B", which implemented these three practices? Which would you bet on for having better cyber security performance, A or B? Myself, I'd bet on B, because B would pick up whatever practices it needed and would do a much better job implementing them, integrating them, tuning them, continuously innovating, and delivering performance.

What's Wrong #2: Evidence of Effectiveness Is Lacking

In public statements, including Congressional testimony, the NIST folks are proclaiming that the CSF is "industry-led", that there is "excellent participation" from all quarters, and that there is "wide consensus" on the CSF as it is taking shape. These statements are less about the facts of the process than about giving political and cultural legitimacy to the process and, especially, to the CSF outcomes. Essentially, they are substitutes for any substantive evidence that these practices actually improve cyber security performance.

Evidence of effectiveness was the focus of my initial submission to NIST. My recommendation then and now is that all of us, government and industry, would be way better off devoting our limited resources to evaluating alternative practices to see which is more effective, in what circumstances, and why. I'd much rather see two dozen pilot programs and experiments testing various practices than I would see two dozen practices being implemented with no evidence behind them, chosen only because they got "anointed" in some process of meetings with mixtures of random participants.
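To make the idea of testing practices concrete, here is one hypothetical way a single pilot might be evaluated: a two-sided permutation test comparing incident counts between organizations that adopted a practice and comparable organizations that did not. The outcome measure (incidents per organization-year), the numbers, and the function name `permutation_test` are assumptions for illustration only; a real evaluation would also have to account for how organizations were selected and how incidents are detected and counted.

```python
import random


def mean(xs):
    return sum(xs) / len(xs)


def permutation_test(pilot, control, n_resamples=10_000, seed=0):
    """Two-sided permutation test on the difference in mean incident counts.

    Returns (observed difference, estimated p-value). If the practice made no
    difference, the group labels would be exchangeable, so we reshuffle them and
    count how often a difference at least as large as the observed one appears.
    """
    rng = random.Random(seed)  # fixed seed makes the estimate reproducible
    observed = mean(pilot) - mean(control)
    pooled = list(pilot) + list(control)
    n = len(pilot)
    extreme = 0
    for _ in range(n_resamples):
        rng.shuffle(pooled)
        diff = mean(pooled[:n]) - mean(pooled[n:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction: the observed labeling counts as one of the permutations.
    return observed, (extreme + 1) / (n_resamples + 1)


if __name__ == "__main__":
    # Hypothetical incidents per organization-year; not real data.
    pilot = [2, 1, 3, 0, 1, 2, 1, 0]    # organizations that adopted the practice
    control = [4, 3, 5, 2, 4, 3, 6, 3]  # comparable organizations that did not
    diff, p = permutation_test(pilot, control)
    print(f"difference in means: {diff:.2f}, p ~ {p:.4f}")
```

The permutation test is chosen here because it makes no distributional assumptions about small, lumpy incident counts; it is one reasonable option among many, not a prescribed method.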

It's not the practices themselves that are missing. What's more important is the missing understanding and learning about what drives effective cyber security. Focusing attention on gathering evidence of effectiveness will dramatically improve understanding and learning, both Single Loop and Double Loop. To put it another way, once you have the learning loops in place and working, it's a no-brainer to design and implement appropriate practices. But the reverse is not true at all. If you just implement a list of practices, it's almost guaranteed that the learning loops will not develop. Instead what you see is "going through the motions", at best "fulfilling the letter but not the spirit" of the practices.

What's Wrong #3: Give a Man a Fish...

The full expression goes: "Give a man a fish, and you'll feed him for a day. Teach him to fish and you'll feed him for a lifetime." In this analogy, the "fish" are practices, and the "man" is any company or organization, but mostly laggard organizations that have poor cyber security programs in place.

Being a static list of practices, the CSF encourages people to think that all they have to do to achieve good cyber security is implement the practices to some executive-defined "tier" of capability maturity, and then they are done. But this is equivalent to getting and eating one fish.

Moving away from the metaphor, I'll explain the problem more directly with a series of charts and images. The following draws on ideas and presentations from Josh Corman (@joshcorman) and Alex Hutton (@alexhutton), among others. This is part of the presentation I gave at BSidesLA in August (slides).

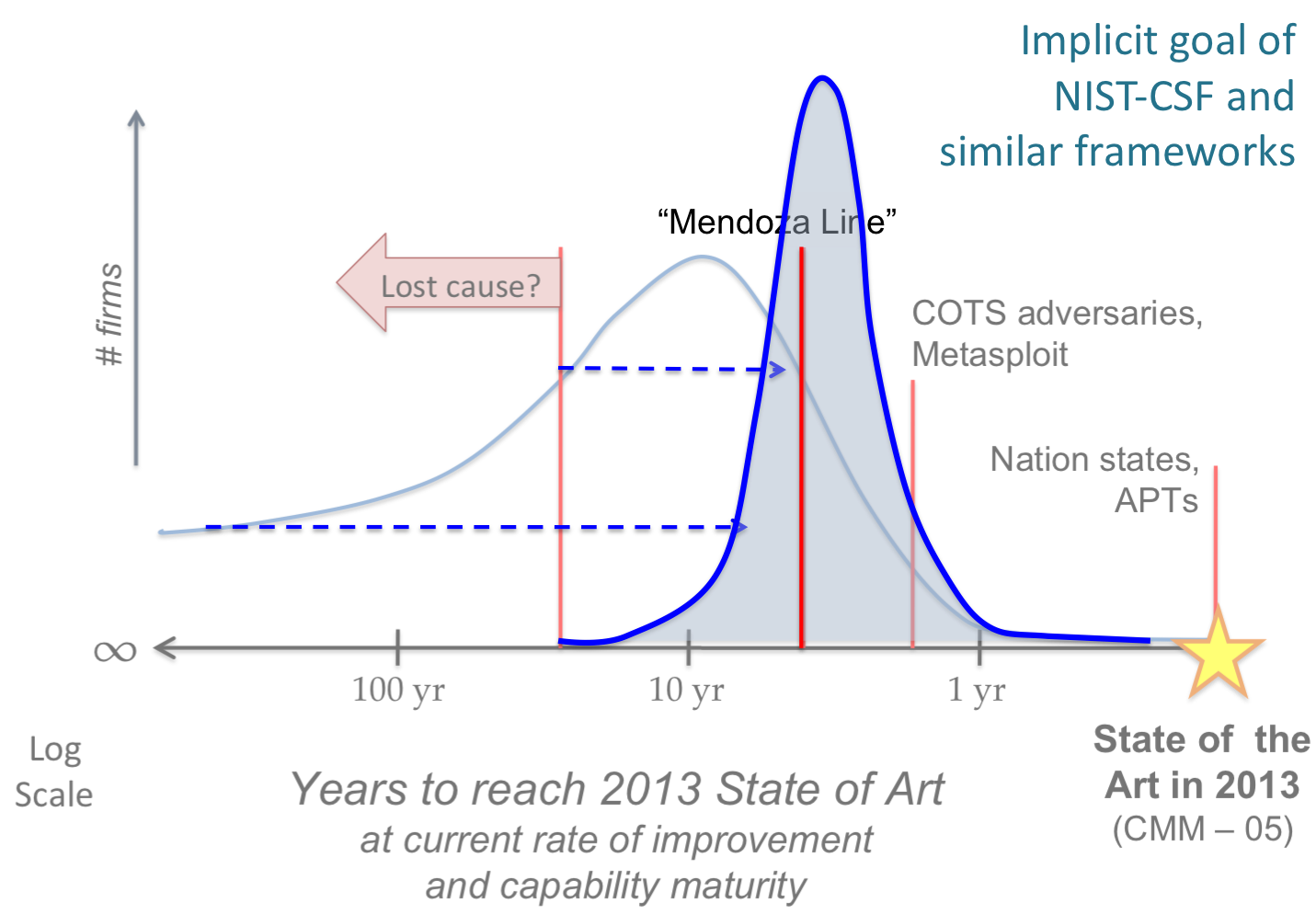

Let's define the "State of the Art - 2013" as the very best of cyber security performance today, however you want to define it (e.g. "CMM-05: Capability Maturity Model level 5"). Maybe it's found in several companies, or a single organization, or in a composite of organizations.

Now let's consider how long it will take all other organizations, at their current rate of learning and improvement, to reach this "State of the Art - 2013". The chart below is my rough estimate of what the histogram might look like, with buckets for each year behind the state of the art.

The point of this chart is simple -- almost no organizations are at the state of the art, and very, very few are even close. The bulk of organizations, large and small, is lagging the state of the art by a large margin.

Now let's consider threat agents and their equivalent of "State of the Art - 2013". This might be defined by nation-state attackers and maybe a few select elite criminal networks. The chart below is my rough estimate of the histogram of the attacker population in terms of how long it will take them to catch up with the current state of the art.

There are three simple points to this slide. First, there are quite a few adversaries that are at or very close to the state of the art, many more than defenders. (For the moment, let's leave aside the NSA as threat actor. I want to focus on adversaries that most commercial and public sector organizations worry about.) Second, the mass of adversaries are not very far from the state of the art, thanks to various Commercial Off-The-Shelf (COTS) solutions and services, including the famous Metasploit tool. The key feature of these solutions and services is that they advance rapidly each year in capability, not just in exploit/attack capabilities but in the full range of capabilities that adversaries might need (e.g. monetization). (This rate of innovation has been called "H.D. Moore's Law" by Josh Corman, named after the inventor and chief engineer of Metasploit.) Essentially, the mass of adversaries are being carried along an innovation trajectory at a brisk pace because they are riding on a vibrant and healthy innovation ecosystem. The same cannot be said for defenders.

The third point is that there is a trailing group of attackers that go after only the "low hanging fruit" among defenders. They only put in enough effort to keep up with the bare minimum level of cyber security that a random organization might achieve. This bare minimum line has been dubbed the "Mendoza Line" by Alex Hutton, after a professional baseball player who, infamously, set a standard by playing in the major leagues for a long time with a very low batting average (~.200). Below the Mendoza Line, no ballplayer can hope to stay in the Major Leagues, no matter how good his fielding skills are.

Now let's put these two charts together, but this time let's use a log scale for time, so we can see orders of magnitude. The blue line is a continuous (smoothed) version of the histogram from the first chart, above.

Here's where the analysis gets controversial because I'm doing some reasoned guessing. Many readers might have different conjectures or guesses. I'm not sure anyone has data to calibrate this, but I'm open to other opinions and guesses.

There are three messages in this chart. First, a large majority of defender organizations are behind the "Metasploit Line", which means that they aren't keeping up with the majority of adversaries. Second, I'd guess that more than half of organizations -- large, medium, and small -- fall below the "Mendoza Line" of bare minimum cyber security. Third, I'd guess that there are a large number of organizations that are so stuck in dysfunction and denial that they aren't making any progress at all toward the "State of the Art - 2013". In other words, at the current rate of improvement and learning, they will never get there. In the BSidesLA presentation, I have a funny GIF and PPTX animation to go along with this, but I haven't converted it to a movie yet, so I'll just show four stills. Hopefully, you get the humor and message. (Shout out to @heidishey for stimulating this visual metaphor.)

Are they a "Lost Cause"? God, we hope not, because if so, we are collectively screwed. Helping the Laggards is one of the most common justifications for the CSF and related efforts. The chart below shows the implicit goal using the same analysis frame as the previous charts.

The implicit goal of the CSF is to move all organizations above the "Lost Cause" line, and most organizations above the Mendoza Line. But also notice that it won't really do much for organizations already close to the State of the Art, and it may not even help most organizations catch up with the Metasploit Line. The CSF pursues this goal by handing a "fish" to these Laggards, in the form of a static list of practices.
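The arithmetic behind these charts is simple, and a small sketch may make the "Lost Cause" region easier to see. Assume (a simplification of mine, not something the charts specify) that each organization has a fixed capability gap to the "State of the Art - 2013" and improves at a constant rate: its catch-up time is gap divided by rate, and a rate of zero means it never arrives. The positions of the Metasploit and Mendoza Lines below, and all organization values, are hypothetical placeholders, since the charts are reasoned guesses rather than calibrated data.

```python
import math

# Hypothetical positions of the two lines, in years behind "State of the Art - 2013".
# The Metasploit Line sits close to the state of the art; the Mendoza Line sits further out.
METASPLOIT_LINE_YEARS = 2.0
MENDOZA_LINE_YEARS = 10.0


def years_to_state_of_art(gap: float, rate: float) -> float:
    """Years to close a fixed capability gap at a constant rate of improvement."""
    if gap <= 0:
        return 0.0       # already at the state of the art
    if rate <= 0:
        return math.inf  # no progress: never gets there
    return gap / rate


def region(years: float) -> str:
    """Place an organization in a chart region by its catch-up time."""
    if math.isinf(years):
        return "Lost Cause"
    if years > MENDOZA_LINE_YEARS:
        return "below the Mendoza Line"
    if years > METASPLOIT_LINE_YEARS:
        return "behind the Metasploit Line"
    return "keeping up with most adversaries"


if __name__ == "__main__":
    # Hypothetical organizations: (name, gap in maturity levels, levels gained per year).
    orgs = [("A", 1.0, 1.0), ("B", 4.0, 0.5), ("C", 4.0, 0.1), ("D", 4.0, 0.0)]
    for name, gap, rate in orgs:
        years = years_to_state_of_art(gap, rate)
        # The charts use a log scale, so log10(years) is the position in orders of magnitude.
        log_years = math.log10(years) if years > 0 else -math.inf
        print(f"{name}: {years:.1f} years (log10 {log_years:.2f}) -> {region(years)}")
```

Under these assumptions, shrinking the gap once shifts an organization left once, while raising its rate keeps shifting it; and with a rate of zero, no reduction in the gap (short of closing it entirely) moves it out of the "Lost Cause" region.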

I argue that this isn't likely to happen, even if all the incentives work as hoped. This chart shows what I think will happen:

Message: the CSF will mostly move lagging organizations from the "Lost Cause" region to the below-the-Mendoza Line region, and also move some just above the Mendoza Line. Better than before but still inadequate if we want to improve systemic cyber security.

Here's the final chart in this sequence. It shows what I think we need, and maybe what is possible if we are successful in implementing a performance-oriented framework with strong intrinsic (built-in) incentive systems. This would be equivalent to "...teaching him to fish...".

In case you haven't seen it, here's the introductory post to the framework I'm proposing as an alternative to the CSF: Ten Dimensions of Cyber Security Performance.


What's Wrong #4: If You Eat Your CSF, You'll Get a Cupcake

This post is already long, so I'll leave discussion of incentives to a separate post. But, for completeness and closure, I want to say here that nearly all of the proposed incentives are structured as what economists call "side payments". A side payment operates much like the offer of a sweet treat to your son or daughter if he or she eats their vegetables. This works for inconsequential, peripheral behaviors but is a poor incentive structure for core behaviors. In the next post, I'll dive into this more, plus maybe a review of the proposed alternative incentives.

Very nice, Russ -

I will be attending the NIST workshop in Dallas next week - are you coming?

How about SIRACon?

Best ...

Patrick

Thanks, Patrick. Dallas -- no. SIRAcon -- yes!

CSF minute waltz: genius.

Golf as exec language: bold.

Your continued focus on practical performance-based guidance: priceless.

Please keep it up. Maybe CSF is a 1.0 and Russell Thomas elements can be added over time in a 1.1, 2.0, etc. fashion. ab

Thanks, Andy. I appreciate your consideration and supportive comments.

About the current CSF vs future revisions, I accept that there is a need to get something in place right now that is unobjectionable on its face, and that's where the CSF is going. I'm old enough to accept that big changes in thinking take time, often a long time. Deming and his methods were largely unknown in the US for decades, but then took industry by storm.

(Funny coincidence: The TV documentary "If Japan Can... Why Can't We?" that was partly credited with bringing Deming to the US public ran on June 24, 1980, just one week after I joined Hewlett-Packard in Production Engineering -- first job out of college. HP was just beginning on the road to Total Quality Management, and I got to be a part of it.)